Iterative revisions as part of assessment design

Learner feedback and performance identify revision opportunities

By Caitlin Friend, PhD, MITx Digital Learning Lab Fellow, Biology, and Darcy Gordon, PhD, Instructor of Blended and Online Learning Initiatives, MITx Digital Learning Scientist, Biology

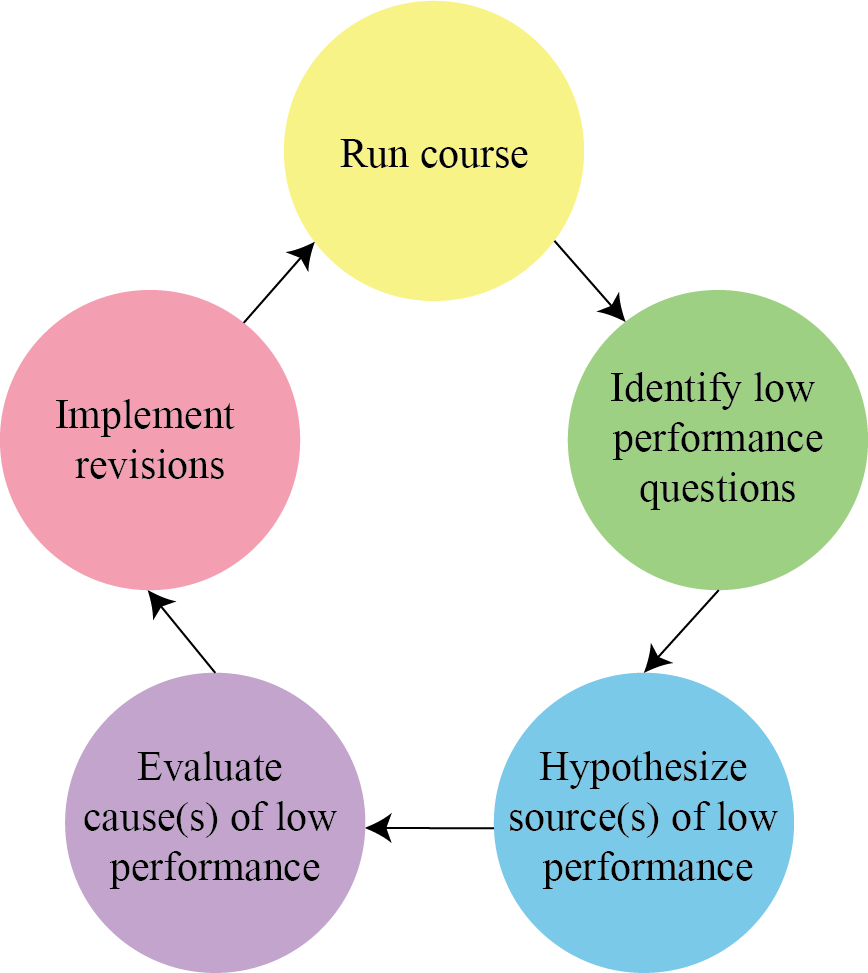

When a learner enrolls in one of MITx Biology’s online courses, they encounter a variety of assessments, constituting hundreds of problems, that test their understanding of the material. Despite our best efforts to design challenging but fair assessments, which we refine through extensive beta-testing during initial course development, there is always room for improvement. We recently designed a framework for revising assessments in online courses, published in the IEEE Learning with MOOCs proceedings, to provide a model for other course designers. We use our framework to continuously improve assessments by identifying opportunities to increase their effectiveness. Between runs of an online course, we use analytics and feedback from the previous cohort in an iterative revision process to refine the assessments. Through revision, we increase assessment effectiveness, cultivate problems that are optimized for specific learning goals, and save time by not having to design new problems.

Using Learner Feedback to Identify Revision Opportunities

We use a number of strategies to identify problems for revision. During a given course run, we collect learner feedback from the discussion forum. Students use the forum to ask questions about problems that they find difficult or confusing, but they can also post about potential errors in assessment design. Problems that generate a lot of learner feedback are often good targets for revision.

However, we are always mindful to create a constructive educational experience, as learning occurs along a path that includes making and learning from mistakes. In some of our concept check problems that we call “test yourself questions,” learners can try until they arrive at the correct answer because our goals are for learners to practice, get quick feedback, and build confidence. We rely on learner feedback rather than performance to guide revisions for these problems.

Using Learner Performance to Identify Revision Opportunities

At the end of a course run, we analyze learner performance data to identify specific problems for potential revision. For this type of revision, we focus on mid-level assessments (such as problem sets or quizzes) that require learners to synthesize multiple concepts with a limited number of attempts. With these assessments, we try not only to challenge our learners but also to motivate them to strengthen their critical thinking skills, as these problems usually involve higher-order cognitive tasks such as application, analysis, and evaluation.

To streamline our process, we developed a framework to guide our assessment revisions. We flag problems where the average learner score is below 70%. We find this threshold accounts for random guessing while identifying whether learners can answer the problem based on the course material [1, 2]. However, other instructors can set limits that make sense for their assessments and learning goals.
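As a rough illustration of this flagging step, the sketch below computes the average score per problem and keeps those below the 70% threshold. The data layout (a dictionary of problem IDs mapped to lists of learner scores between 0 and 1), the function name, and the problem IDs are all assumptions made for this example; they are not the format of any particular course platform's export.

```python
from statistics import mean

# Threshold from the text: flag problems with an average score below 70%.
# Other instructors may choose a different value.
FLAG_THRESHOLD = 0.70


def flag_low_performance(scores_by_problem, threshold=FLAG_THRESHOLD):
    """Return {problem_id: average_score} for problems averaging below threshold.

    scores_by_problem is a hypothetical layout: each problem ID maps to a
    list of learner scores expressed as fractions between 0 and 1.
    """
    flagged = {}
    for problem_id, scores in scores_by_problem.items():
        if not scores:
            # No submissions, so there is nothing to average.
            continue
        average = mean(scores)
        if average < threshold:
            flagged[problem_id] = average
    return flagged


# Made-up scores for three hypothetical problems.
example_scores = {
    "pset1_q3": [1.0, 0.5, 0.0, 0.5],
    "pset1_q4": [1.0, 1.0, 0.5, 1.0],
    "quiz2_q1": [],
}

flagged = flag_low_performance(example_scores)
for problem_id, average in sorted(flagged.items()):
    print(f"{problem_id}: average score {average:.0%}")
```

Keeping the threshold as a parameter reflects the point above that the limit should fit the assessment and its learning goals.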

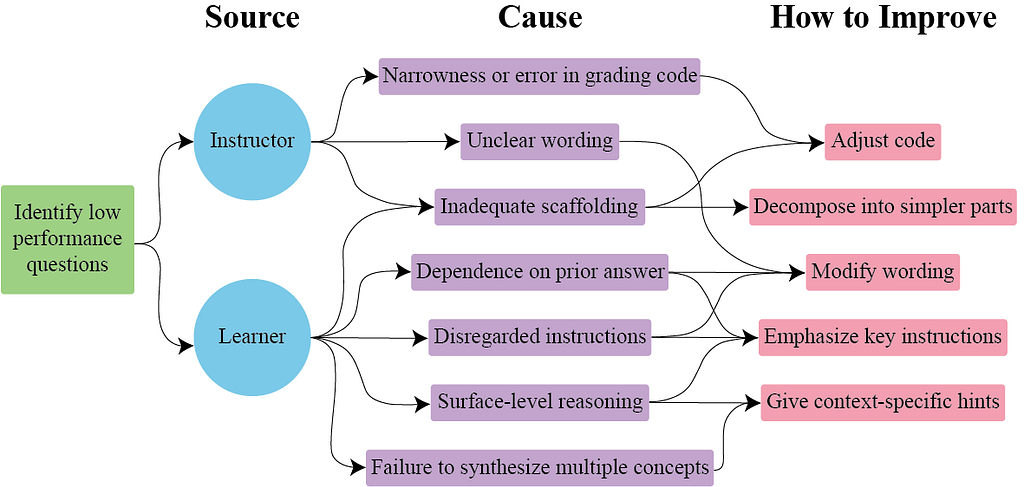

[Alt text for Figure 2: After identifying a low-performance question, you hypothesize the source as either the instructor or the learner. A given question can have multiple sources or causes for low performance. Based on the source, there are a few potential causes of low performance to consider. Instructor-based causes are narrowness or error in grading code, unclear wording, and inadequate scaffolding. Learner-based causes are inadequate scaffolding, dependence on prior answer, disregarded instructions, surface-level reasoning, and failure to synthesize multiple concepts. Suggestions for how to improve a question during revision are based on the hypothesized cause. For narrowness or error in grading code, you can adjust the code. For unclear wording, you can modify the wording. For inadequate scaffolding, you can adjust the code or decompose into simpler parts. For dependence on prior answer, you can modify the wording or emphasize key instructions. For disregarded instructions, you can modify the wording or emphasize key instructions. For surface-level reasoning, you can emphasize key instructions or add context-specific hints. For failure to synthesize multiple concepts, you can add context-specific hints.]

After identifying low-performance problems, we hypothesize why learners struggled to reach the correct answer. Although we are not privy to every learner’s thought process, our experience as learners ourselves informs our hypotheses about the sources (i.e. where mistakes come from) and causes (i.e. the kinds of mistakes) that lead to low performance on a given problem. Based on our hypotheses, we then make decisions on how to revise these problems.

Potential sources for low performance could be the learners, the instructor, or a combination of the two. When there is a mistake in problem construction, the instructor is the source. When a problem is correctly written but learners make mistakes while thinking through the answer, the learner is the source. In some cases, the instructor and the learner are both the source: learners should be able to correctly answer a problem that requires thinking through multiple cognitive steps, but the instructor has likely made the problem unnecessarily complex. We categorize the cause of low performance in such cases as “inadequate scaffolding.” We would revise this kind of problem by breaking it up into multiple problems so learners can check their understanding throughout the process with separate submissions.
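The cause-to-revision pairings described in the alt text for Figure 2 can be written down as a simple lookup table. The sketch below is one way to do that, with the cause and revision labels taken from the figure description; the dictionary and function names are hypothetical, and the hypothesized causes still have to come from the instructor's own judgment.

```python
# Suggested revisions for each hypothesized cause, following the pairings
# in the Figure 2 description. The structure itself is illustrative.
REVISIONS_BY_CAUSE = {
    "narrowness or error in grading code": ["adjust the code"],
    "unclear wording": ["modify the wording"],
    "inadequate scaffolding": [
        "adjust the code",
        "decompose into simpler parts",
    ],
    "dependence on prior answer": [
        "modify the wording",
        "emphasize key instructions",
    ],
    "disregarded instructions": [
        "modify the wording",
        "emphasize key instructions",
    ],
    "surface-level reasoning": [
        "emphasize key instructions",
        "add context-specific hints",
    ],
    "failure to synthesize multiple concepts": ["add context-specific hints"],
}


def suggest_revisions(hypothesized_causes):
    """Collect revision suggestions for one problem, without duplicates.

    A problem can have several hypothesized causes, so suggestions are
    merged in the order the causes are listed.
    """
    suggestions = []
    for cause in hypothesized_causes:
        for revision in REVISIONS_BY_CAUSE[cause]:
            if revision not in suggestions:
                suggestions.append(revision)
    return suggestions


# Example: a problem hypothesized to have two causes of low performance.
print(suggest_revisions(["unclear wording", "surface-level reasoning"]))
```

An unrecognized cause raises a `KeyError` here, which is a deliberate choice in this sketch so that misspelled labels are caught instead of silently ignored.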

All Courses Can Benefit From Revisions

Reusing assessments has many benefits for the instructor and the learners, including less time spent writing new problems and greater educational effectiveness. Although we use our revision framework for online courses, this process can be applied to any type of assessment or course setting where learner performance data is gathered. Revising between runs, especially the first few runs, will help make your assessments as effective as possible.

Citation: C. M. Friend, M. Avello, M. E. Wiltrout and D. G. Gordon, “A Framework for Revision of MOOC Formative Assessments,” 2022 IEEE Learning with MOOCS (LWMOOCS), 2022, pp. 151–154, doi: 10.1109/LWMOOCS53067.2022.9927973.

Iterative revisions as part of assessment design was originally published in MIT Open Learning on Medium.